You sit down on a Sunday with three good stories, a rough opinion on what they mean, and forty minutes before dinner. You paste your notes into ChatGPT. You ask for a newsletter draft. You get back twelve hundred words of clean, structured, perfectly grammatical prose.

You read it once.

It is fine. It says the things. It moves between sections. It opens with a hook of the kind that opens things.

It closes with a takeaway of the kind that closes things. There is nothing technically wrong with it. There is also nothing in it that you would have written.

Something specific is missing, and you can feel it in your chest before you can name it. The take you would have taken. The detail you would have noticed.

The unexpected line you would have left in, even though it was risky. The pivot from one idea to another that only your mind would have made.

That is what happens when the model writes the parts of the work it never had access to. No prompt can put them back in.

Last week, I wrote about the voice contract your readers signed when they subscribed. The contract is the relationship. Today’s question is what specifically breaks the contract when a generic AI writes the issue.

Earlier this week, Cagri broke down newsletter source monitoring as five tasks pretending to be one. What happens after the curation step, when the writing itself goes through a generic model, is a parallel five-element loss. The two posts together cover both halves of the same weekly disappearance.

Why Does AI Writing All Sound the Same?

Because, structurally, it is the same.

In July 2024, Anil Doshi at UCL and Oliver Hauser at the University of Exeter published a paper in Science Advances that has quietly become essential reading for any creator using AI to write. Their study randomized hundreds of writers into three groups and asked them to write a short story. One group got no AI assistance. The other two could request one or five AI generated story ideas before writing.

The findings cut both ways, and both directions matter for newsletters.

Stories written with AI assistance were rated as more creative, better written, and more enjoyable by independent evaluators. Especially among less skilled writers. The individual quality went up.

And the stories with AI assistance were measurably more similar to each other. Cosine similarity between stories rose by 10.7% with one AI idea and 8.9% with five AI ideas. Across writers, AI assistance produced convergence. The collective range of voices contracted.
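If "cosine similarity" is new to you, a minimal sketch may help. It assumes the crudest possible representation of a story, plain word counts, and it is not a reproduction of the study's method. Every function name here is hypothetical. The idea is only this: turn each text into a vector, measure how closely two vectors point in the same direction, then average that across every pair of writers.

```python
import math
import re
from collections import Counter
from itertools import combinations


def word_counts(text: str) -> Counter:
    """Lowercase the text and count its words (assumption: a crude stand-in for a real text representation)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine between two word-count vectors: near 1.0 for the same word mix, 0.0 for no shared words."""
    va, vb = word_counts(a), word_counts(b)
    dot = sum(va[w] * vb[w] for w in va.keys() & vb.keys())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty text shares nothing with anything
    return dot / (norm_a * norm_b)


def mean_pairwise_similarity(texts: list[str]) -> float:
    """Average similarity over every pair of texts in a group."""
    pairs = list(combinations(texts, 2))
    if not pairs:
        return 0.0
    return sum(cosine_similarity(a, b) for a, b in pairs) / len(pairs)


def relative_change(before: float, after: float) -> float:
    """Relative rise from one group average to another (before must be nonzero)."""
    return (after - before) / before
```

A figure like "rose by 10.7%" is a relative change of this kind: the average pairwise similarity in one group compared against the average in another.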

Sit with that for a moment.

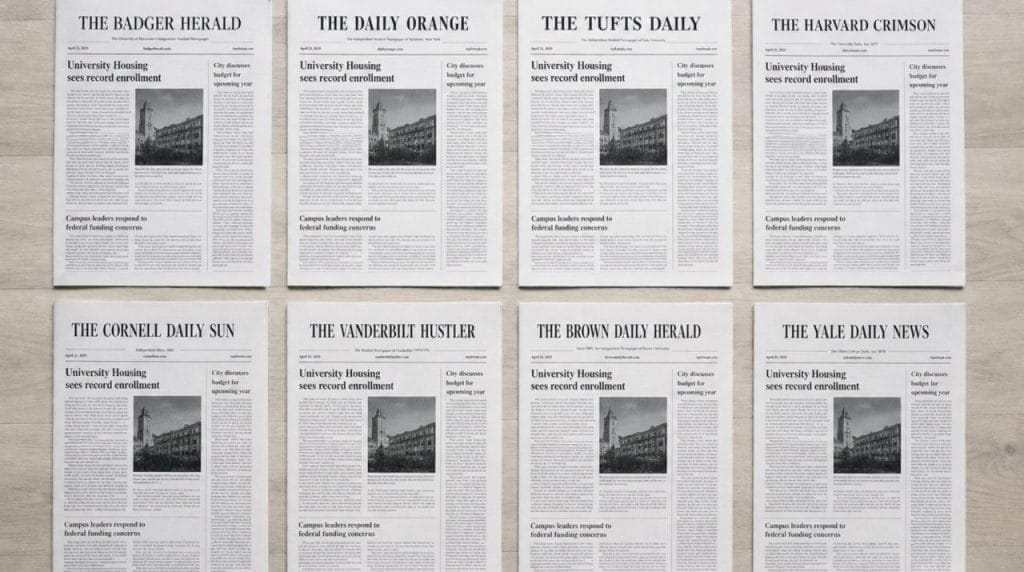

If every newsletter creator in your niche reaches for ChatGPT on Sunday night and pastes a similar prompt about a similar story, the inboxes of your shared readers fill up with newsletters that are individually competent and collectively interchangeable. The thing your subscribers cannot get anywhere else, your specific perspective, gets quietly diluted across the whole category.

A second study by Vishakh Padmakumar and He He at NYU pinpointed the mechanism. They compared writers using a baseline GPT-3 model to writers using the instruction tuned variant, the kind of model optimized for safe, helpful, polished output. That tuning is what sits inside almost every general purpose AI writing tool you have used.

Their finding was sharp. The tuned model produced statistically less diverse essays at both the word level and the content level. Writers using it converged on identical multi-word phrases like “keep up with the news” or “students should learn in school,” even when they were writing about completely different topics.
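You can look for the same kind of convergence in your own niche. The sketch below is an illustration only, not the researchers' method: it assumes you have a handful of drafts keyed by writer, and it lists the multi-word phrases that appear in more than one writer's draft. The function names and the five-word default are hypothetical choices.

```python
import re
from collections import defaultdict


def ngrams(text: str, n: int = 5) -> set[str]:
    """Return the set of n-word phrases in a text, lowercased."""
    words = re.findall(r"[a-z']+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_phrases(drafts: dict[str, str], n: int = 5, min_writers: int = 2) -> dict[str, set[str]]:
    """Map each phrase to the writers who used it, keeping phrases used by at least min_writers."""
    seen: dict[str, set[str]] = defaultdict(set)
    for writer, text in drafts.items():
        for phrase in ngrams(text, n):
            seen[phrase].add(writer)
    return {p: w for p, w in seen.items() if len(w) >= min_writers}


drafts = {
    "writer_a": "I try to keep up with the news daily",
    "writer_b": "Few people keep up with the news now",
}
print(shared_phrases(drafts))
```

The longer the shared phrase and the more unrelated the topics, the harder it is to explain the overlap as coincidence.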

The safer the model, the more it pulls every writer toward the same statistical center.

Generic AI writing assistants behave less like a creative collaborator and more like an editor who has read every newsletter ever written and quietly defaults to the average of all of them.

What Does AI Writing Miss? Five Specific Erasures

The convergence research describes the macro phenomenon. Inside any one issue, the loss takes a specific shape. Five elements get filed off the work, and they are the elements your readers came for.

1. Your Stance

You read a story. Inside thirty seconds, your editorial mind has formed a position. You think the framing is wrong.

You think one source is more credible than another. You think the obvious takeaway is shallow and the second order takeaway is what actually matters.

Generic AI hedges. It writes “this raises questions about” where you would have written “this is wrong.” It writes “some critics argue that” where you would have written “this is the dumbest move I have seen this quarter.” AI systems are explicitly trained to avoid making definitive claims, producing the hedge phrases (“may potentially,” “could possibly,” “appears to indicate”) that drain the editorial spine out of any draft.

Newsletters live on the editorial spine. The reason a reader chose your newsletter over a Google News alert is that they wanted somebody else to take a position on what mattered this week. When the AI writes the position out of the issue, what arrives in the inbox is closer to a press digest than a publication.

2. The Specific Detail

Your draft contains a memory from a conference where someone said something specific. It contains a number you double checked because you suspected the round version was wrong. It contains the name of a small newsletter that you read but most people have not heard of. It contains the exact sentence a subscriber emailed you last week.

Generic AI has none of those things. It has the average of every newsletter on this topic, which means it defaults to the most common, the most generic, the most safely sourced.

The specific detail vanishes. You get the round number where you wanted the precise one. You get “industry experts say” where you wanted the named person. You get the textbook citation where you wanted the unexpected reference.

Research on AI generated academic writing found that more than half of AI produced feedback to student writers lacked concrete examples, defaulting to recycled rubric language. Specifics are how readers know you read the article and did not just ask a model to summarize it. They are also where most of the trust lives.

3. The Unexpected Pivot

Halfway through your usual draft, you make a leap. You pivot from the news of the week to a podcast you listened to on a walk. You connect a piece of newsletter industry data to something you read about restaurant economics.

You bring up your grandmother. The pivot lands because the connection is your connection, and not the most predictable one.

Generic AI optimizes for the predictable connection. It is, by design, a probability machine. It strings together the most likely next idea given the previous idea, and the most likely next idea is the one a thousand other writers have already used.

The Cornell study by Dhruv Agarwal, Mor Naaman, and Aditya Vashistha showed this with writers from different cultures. When 118 participants from India and the United States wrote about local foods, holidays, and celebrities with and without AI suggestions, the AI pulled both groups toward Western defaults. Indian writers got smaller productivity gains than American writers, because they had to keep correcting the AI’s predictable, culturally homogenized suggestions.

What gets erased in the pivot is the surprise, and also the evidence of you reading widely and connecting things only you read.

4. The Cultural and Personal Specificity

Your readers know that you live somewhere specific. You write from a particular city, in a particular industry, with a particular background. The references that pepper your issues are evidence of that location.

A model trained on the statistical average of the English language internet does not have your location. It has San Francisco. It has Brooklyn. It has the specific upper-middle American professional voice that dominates the training corpus.

The Cornell research above showed that this homogenization is structural. Better prompts do not fix it.

For a creator whose newsletter draws subscribers because of a specific cultural lens (a regional industry, a context outside the United States, a working class voice in a white collar topic), the erasure is sharper. The thing that made the newsletter worth subscribing to is precisely what the model is built to smooth out.

5. The Chosen Risk

There is one sentence per issue that you almost cut. The one where you said something a little harder than you usually do. The one where you criticized somebody by name. The one where you admitted a personal mistake. The one where the joke was a bit much. You left it in because you knew it was the line your readers would screenshot.

Generic AI cuts that sentence by default. It is trained to be safe, balanced, and broadly acceptable. It writes the world in a register that will not trigger an unsubscribe and will also not trigger a forward.

The Chakrabarty study at Columbia tested LLM generated short stories against professional human stories using the Torrance Tests of Creative Writing, a fourteen test framework grounded in decades of creativity research. LLM generated stories passed three to ten times fewer of the tests than professional human stories. Expert evaluators described the AI output with words like “hackneyed,” “cliched,” and “random.”

Risk taking is the cost of admission for any newsletter that wants its subscribers to keep choosing it on a Tuesday morning. When the model writes the risk out, it also writes out the reason anybody is reading.

Can Readers Tell If a Newsletter Was Written by AI?

The honest answer is that they do not have to.

I covered the detection research in detail last week, in the post on the voice contract. Controlled tests find readers identify AI text only about half the time.

The newsletter inbox runs on a different test. The reader is comparing your issue to the fifty issues of you they already have in memory. The benchmark is recognition.

What is new this year is that the cultural climate has caught up with the suspicion. In January 2026, the American Dialect Society named slop the 2025 Word of the Year. Merriam-Webster did the same a few weeks earlier. The Economist had already chosen it. Three independent linguistic authorities, in a single year, picked the word that means low-quality digital content produced by AI.

This is a cultural shift you can feel in the inbox. Brandwatch’s social listening report tracked a 200% rise in mentions of slop across 2025, with 82% of sentiment coded mentions trending negative. The audience your newsletter is competing for has spent a year learning a vocabulary for naming and rejecting what AI writing feels like.

The Society of Authors in the UK ran a survey of 787 working writers in early 2024. More than 80% said they were concerned that generative AI would mimic their style, voice, and likeness. And 86% said they were concerned AI would devalue human made creative work.

The professionals who write for a living have moved past asking whether AI can imitate them. Your subscribers may not have the survey data, but they have the feeling.

Why Does AI Writing Pile Up Tells, Even Across Writers?

Because the model has lexical habits, the way a person has accents.

A 2025 study indexed in PubMed analyzed 135 vocabulary terms suspected of being AI influenced and tracked their frequency in medical literature from 2000 to 2024. One hundred and three of the 135 showed meaningful increases in 2024 alone. The steepest jumps were instantly recognizable to any editor reading a lot of AI output: delve, underscore, primarily, meticulous, boast.

The same researchers noted something else worth pausing on. The pattern of usage across thousands of papers from different authors was suspiciously uniform. When a model has a vocabulary preference, every writer using it inherits the same fingerprint.

The reader who flagged your issue last week as “feeling AI” was reacting to more than your draft alone. They were reacting to the cumulative experience of reading hundreds of AI touched newsletters all reaching for the same shortlist of words. When that vocabulary quietly replaces yours, the substitution is subtle on any one Tuesday and unmistakable across ten of them.
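If you want to watch that drift across your own issues, a rough sketch follows. It assumes the five words named above are a reasonable starting watchlist and matches them by word stem, which is a blunt shortcut that will also catch words like "boastful." The names `TELL_STEMS` and `tell_rate` are hypothetical.

```python
import re

# Assumption: stems for the five words named in the study summary above.
TELL_STEMS = ("delv", "underscor", "primarily", "meticulous", "boast")


def tell_rate(text: str, stems: tuple[str, ...] = TELL_STEMS) -> float:
    """Return watchlist hits per 1,000 words of text."""
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for w in words if w.startswith(stems))
    return 1000 * hits / len(words)


# Hypothetical usage: one rate per issue, oldest first, to see the trend.
issues = ["First issue text goes here.", "We delve into meticulous detail."]
print([round(tell_rate(issue), 1) for issue in issues])
```

One issue's number means little. The trend across ten issues is the thing to look at, and the watchlist should be swapped for the words you notice creeping into your own drafts.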

A newsletter is a relationship measured in vocabulary you do not normally notice. Generic AI changes the vocabulary first.

What Gets Lost Is Editorial Substance

This is the part that makes the problem confusing for most creators.

The conversation about AI and writing tends to get stuck on voice, which is a fair concern and one I have written about. Voice is the medium through which substance arrives. The thing that actually gets lost when a generic model writes the issue is the editorial substance the voice was carrying.

Editorial substance is the position you took, the detail you noticed, the connection you made, the lens you used, and the risk you accepted. Voice is how all of that sounds when you write it. A model can mimic the surface of a voice with enough prompting and still arrive at a draft empty of substance. Writers worry that their voice will be replicated. The more pressing problem is that the generic model never had access to the substance that voice was built to deliver.

Your readers are subscribed to the specific things you have been right about, weird about, brave about, and observant about, said in your way of saying them. Voice in the abstract was never the contract. The voice is the wrapper. The substance is the gift.

Generic AI is good at wrapping. It is bad at gifting.

How Do I Know What My Issues Are Losing? The Erasure Test

You do not need a tool to find out whether your issues have started losing editorial substance. You need an hour with a printer and three colored pens.

This is a different exercise from the voice audit I gave you last week. The voice audit measures whether your sentences still sound like the same person. The Erasure Test measures whether they still carry the editorial substance your readers came for.

Run both. They diagnose different organs of the same body.

Print your last issue. The one you sent on the day you were tired. Sit down with three colored pens.

Pass 1: The Stance Pass. Take a red pen. Underline every sentence in the issue where you take a position on something. Where you describe a position does not count. Where you take one does. “X is wrong about Y because Z” counts. “Some have argued that X is wrong about Y” does not.

Count your underlines. A healthy issue has between five and fifteen, depending on length. An issue that has slid into AI style hedging will have one or two, often near the top, with a long descriptive middle that contains none.

Pass 2: The Specificity Pass. Take a blue pen. Circle every named person, named place, specific number that is not a round one, named source, and concrete sensory detail. The exact sentence a reader emailed you. The brand of coffee you mentioned in passing. The specific minute of the podcast.

Count your circles. Most healthy newsletter issues have between fifteen and forty named specifics across two thousand words. An AI drifted issue will have five or fewer, almost all at the start, with a long abstract middle.

Pass 3: The Risk Pass. Take a green pen. Circle every sentence you almost cut. The line that pushed harder than usual. The aside you debated removing. The joke that was a bit much. The opinion you suspected your most conservative reader would not love.

Count your circles. The healthy range is one to three per issue. An AI drifted issue will have zero, because the model has already made the cut for you.

Now compare your three color counts to the counts from an issue you wrote two years ago, before you started reaching for the model on tired weeks. Most creators find the older issue has roughly twice the marks across all three passes. That gap is what your readers have been noticing slowly, the way you notice your own knees getting older.
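If you would rather keep the tallies in a file than on paper, the three passes can be sketched as below. The healthy ranges are the ones given above. Treating the specifics range as a rate per two thousand words is my reading of the text, and the class and function names are hypothetical. The pens still do the real work; this only does the arithmetic.

```python
from dataclasses import dataclass
from typing import Optional

# Lower and upper bounds taken from the three passes described above.
HEALTHY = {"stances": (5, 15), "specifics": (15, 40), "risks": (1, 3)}


@dataclass
class PassCounts:
    stances: int     # red underlines from Pass 1
    specifics: int   # blue circles from Pass 2
    risks: int       # green circles from Pass 3
    word_count: int  # length of the issue in words


def below_range(counts: PassCounts) -> list[str]:
    """Return the passes whose counts fall under the healthy lower bound."""
    # Assumption: the specifics range is stated per two thousand words, so scale it.
    per_2000 = counts.specifics * 2000 / counts.word_count if counts.word_count else 0.0
    observed = {"stances": counts.stances, "specifics": per_2000, "risks": counts.risks}
    return [name for name, (low, _high) in HEALTHY.items() if observed[name] < low]


def older_to_recent_ratio(recent: PassCounts, older: PassCounts) -> Optional[float]:
    """Total marks in the older issue divided by total marks in the recent one."""
    recent_total = recent.stances + recent.specifics + recent.risks
    older_total = older.stances + older.specifics + older.risks
    if recent_total == 0:
        return None  # no marks at all in the recent issue, so no ratio to report
    return older_total / recent_total


recent = PassCounts(stances=2, specifics=5, risks=0, word_count=2000)
older = PassCounts(stances=8, specifics=20, risks=2, word_count=2000)
print(below_range(recent), older_to_recent_ratio(recent, older))
```

A ratio near two matches the gap described above. The list of passes that fall below range tells you which of the three to repair first.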

The Erasure Test takes about an hour per issue. The information it produces is the actual diagnosis for whether AI is helping you or quietly thinning your work.

What Should AI Actually Do for a Newsletter Creator?

The answer is to write the parts that came from you, using inputs that contain you.

Generic AI fails newsletter writers because it has never read them. It has read everyone, in aggregate, and at the moment of generation, it produces the statistical average of everyone. The substance erasure is downstream of the input erasure. If the model never knew your stance on this topic, your specific archive of references, your particular pivots, or the risks you have historically taken, it cannot put any of that back into a draft, no matter how thorough the prompt.

This is the design problem HeyNews was built to solve. Your archive already contains every position you have taken, every specific you have noticed, every connection you have made, and every risk you have accepted.

The AI Writer reads from your archive specifically, and not from the average of everyone. The editorial substance it draws on is the substance you have laid down across every issue you have ever published. The wrapper sounds like you because the gift inside is yours.

You still hold final editorial control. The model surfaces a draft built from your patterns. You decide what stays, what cuts, what sharpens. The mechanical labor moves off your plate while the substance stays where it always belonged.

That distinction matters more this year than last. A draft that sounds like the statistical average of the internet now reads, to a 2026 audience, as the thing they are actively learning to filter out. A draft that sounds like the next issue you would have written reads as exactly what they subscribed for.

It All Comes Down To…

- Generic AI writing creates measurable homogenization across writers. Doshi and Hauser’s 2024 Science Advances study showed that cosine similarity between stories rose by up to 10.7% when writers had access to AI generated ideas, and Padmakumar and He showed the effect lives at both the word level and the content level.

- Five specific elements get erased when AI writes a newsletter draft: your stance, the specific detail, the unexpected pivot, cultural and personal specificity, and the chosen risk. Each one is a place readers were trusting you to deliver something only you could.

- The cultural climate of 2025 codified the rejection. Slop was named the 2025 Word of the Year by both the American Dialect Society and Merriam-Webster, and Brandwatch found 82% of sentiment coded mentions trending negative. Your readers now have a vocabulary for the feeling AI writing produces.

- The thing readers actually pay for is editorial substance. The voice is the wrapper. The substance, the position you took, the detail you noticed, the connection you made, is the gift.

- You can run the Erasure Test on your last issue this week with three colored pens. Pass 1 counts your stances. Pass 2 counts your specifics. Pass 3 counts your risks. The combined density tells you whether your issues are still carrying the substance your readers came for.

A newsletter is a contract between one mind and the readers who choose it. The terms are the things only you would notice, the positions only you would take, and the way only you would say them.

Generic AI cannot keep that contract on your behalf, because the model never met you. It met the average of everybody.

The work is to keep the AI from writing the gift. Keep it for the wrapping if you want. The gift has to come from your archive.

See what editorial intelligence looks like when your archive trains the writer.